What You May Have Missed #19

The less utopian side of AI / Key developments: LLaMA, Robot ChatGPT, ControlNet & other generative AI news

The less utopian side of AI

Lawmakers should act now

I predicted at the end of 2022 that lawmakers would put more emphasis on establishing AI regulations. Now that it’s clear AI isn't a temporary trend but something that's here to stay, governments have to act.

In a piece entitled “Will Congress miss its chance to regulate generative AI early?” Fast Company's Mark Sullivan writes about the need to regulate the new wave of generative AI products and systems: “many in Washington now believe that an effective regulatory regime must be put in place at the beginning of new technology waves to push tech companies to build products with consumer protections built in, not bolted on.”

With the previous tech wave, social media, lawmakers waited and allowed companies to self-regulate. “You know that we cannot repeat the ‘OK, move fast and break things and then we’ll come back and figure it out after the fact’ … That would be, I think, a disaster,” concludes Senator Mark Warner (D-Va.), one of the very few lawmakers with a CS background.

NYU’s Gary Marcus published a relevant essay just in time for me to include it today: “Is it time to hit the pause button on AI?”

Section 230 under scrutiny

Section 230 is one of the key reasons why the internet exists as it is. It legally protects companies from liability for the damage that user-generated content shared on their platforms may cause others.

It's now under scrutiny because of the Gonzalez v. Google case. If the Supreme Court rules against Google, it could radically change how all platforms with algorithmically powered feeds (e.g. YouTube, TikTok, Instagram) operate. And, as Alex Valaitis argues, it'd also impact generative AI systems:

Section 230 protects OpenAI from liability for the consequences of the content that ChatGPT generates. Given that companies can't prevent their chatbots from engaging in problematic behavior (e.g. Sydney), an unfavorable ruling would make commercializing them unviable.

AI culture wars

This one I didn't see coming. Maybe because I'm not a US citizen, so I'm not super acquainted with the deep problems that plague the US political landscape.

Nitasha Tiku and Will Oremus write for the Washington Post that there's a “new target for right-wing ire”: woke AI. Leigh Wolf, the creative director for Sen. Ted Cruz (R-Tex.), tweeted earlier this month that “the damage done to the credibility of AI by ChatGPT engineers building in political bias is irreparable.”

Tiku and Oremus write that “his tweet went viral and within hours an online mob harassed three OpenAI employees — two women, one of them Black, and a nonbinary worker — blamed for the AI’s alleged bias against Trump. None of them work directly on ChatGPT, but their faces were shared on right-wing social media.”

I've previously covered the news that ChatGPT shows a political preference, which, as xlr8harder argued, responds more to a motivation to avoid controversy than a conscious leaning toward the political left:

Sam Altman tweeted this in response to the attacks on OpenAI employees:

Another consequence along the same lines is the intention of the CEO of the alt-right social network Gab to create a Christian AI chatbot:

“Chat GPT is programmed to scold you for asking ‘controversial’ or ‘taboo’ questions and then shoves liberal dogma down your throat, trying to program your mind to stop asking those questions. This is why I believe that we must build our own AI and give AI the ability to speak freely without the constraints of liberal propaganda wrapped tightly around its neck. AI is the new information arms race, just like social media before.”

Is this what OpenAI had in mind when releasing ChatGPT to try to build AGI to “benefit all of humanity”?

To AGI and beyond

And it seems they're still convinced they’re well-positioned to achieve such a grandiose goal. The company published a blog post entitled “Planning for AGI and beyond” authored by Sam Altman himself, in which he tackles the short-term and long-term vision of the company. One part that grabbed my attention:

“At some point, the balance between the upsides and downsides of deployments (such as empowering malicious actors, creating social and economic disruptions, and accelerating an unsafe race) could shift, in which case we would significantly change our plans around continuous deployment.”

Would they really stop anything if the downsides (how would we measure them? Who would do it?) surpass the upsides? Hard to believe given the rest of this compilation of dystopian news…

The very same day Altman posted this pic with Grimes and Eliezer Yudkowsky:

This was a surprise: Altman and Yudkowsky are at directly opposite ends of the good-evil AGI belief spectrum. Yudkowsky is one of the most convinced advocates of AI alignment and a diehard believer that it's inevitable that AGI will kill us.

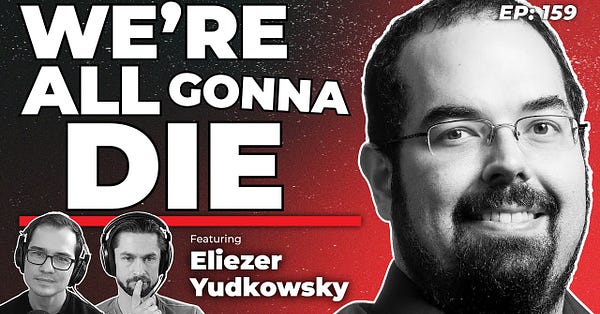

He recently gave an interview to Bankless Shows on the topic, entitled “We’re All Gonna Die,” to give renewed attention to his worries.

As you know, I don't think this is anywhere close to being the most urgent problem for AI (again, just look at the rest of this article). However, it's still worth thinking about, even if only because we're going to hear about it much more often from now on and it’s important to have an informed opinion.
