Superintelligence: OpenAI Says We Have 10 Years to Prepare

A critical analysis of OpenAI's latest blog post, "Governance of superintelligence"

I wonder if there’s any other discipline where “experts” devote so much time to making predictions. AI is special—the only place where you can spend your days talking about the future even when it has already arrived.

OpenAI: A story of AGI and superintelligence

OpenAI was born in 2015, eight years ago, under a bold premise: we can—and will—build artificial general intelligence (AGI). And just as bold has been the determination of the startup’s founders, who have spent these eight years trying to architect the road toward that goal. They may have gone on an off-ramp, but maybe not. No one knows.

It’s funny because not only do AI people love making forecasts (me included), the field as a whole is unable to assess in hindsight whether those forecasts materialized or not. Is the transformer an AGI milestone? Is GPT the breakthrough we were waiting for? Is deep learning the ultimate AI paradigm? We should know by now but we don’t. The lack of consensus among experts is revealing evidence.

Anyway, OpenAI first mentioned AGI in its announcement post, published in December 2015 (they called it “human-level AI” back then), and then made it an official goal in the Charter, the company’s manifesto. In February this year, Sam Altman wrote “AGI and beyond” and revealed the company’s plans for a world post-AGI.

On Monday this week, Altman and his co-founders Greg Brockman and Ilya Sutskever went a step further and co-published a new blog post entitled “Governance of superintelligence,” where they define superintelligences as “AI systems dramatically more capable than even AGI” and claim that “now is a good time to start thinking about the governance of superintelligence.”

OpenAI using “superintelligence” (SI) so openly (pun intended) marks the closing of a cycle for them. In eight years, the company’s official blog has only mentioned this term on two occasions, both in 2023. From 2015 to 2022, no one dared say it. But in the last three months, Altman has gone from saying SI is about “the long term” to saying we should start to think about how to get there safely.

A post-AGI world isn’t a new thought for Altman, even though he has just publicly expressed his sentiment toward it. In February 2015, before starting OpenAI (and not long after Nick Bostrom popularized the concept), Altman wrote a two-part blog post on why we should fear and regulate SI (a must-read I should say if you want to understand his vision).

After reading them, it makes sense. Altman’s message is visionary, clairvoyant even. He was writing about SI eight years ago and now he has in his hands the future of the world—and the opportunity to implement all those crazy beliefs. The cycle is closing. OpenAI’s founders say we’re entering the final phase of this journey. The post they’ve just published echoes Altman’s words: We should be careful and afraid. The only way forward is regulation. There’s no going back. SI is inevitable.

But there’s another reading: that of a self-fulfilling prophecy. Or the appearance of one. Let me ask you this: Do you think these three months of AI progress (or six, let’s be generous and include ChatGPT’s release) warrant this change of discourse?

Altman’s honest overconfidence

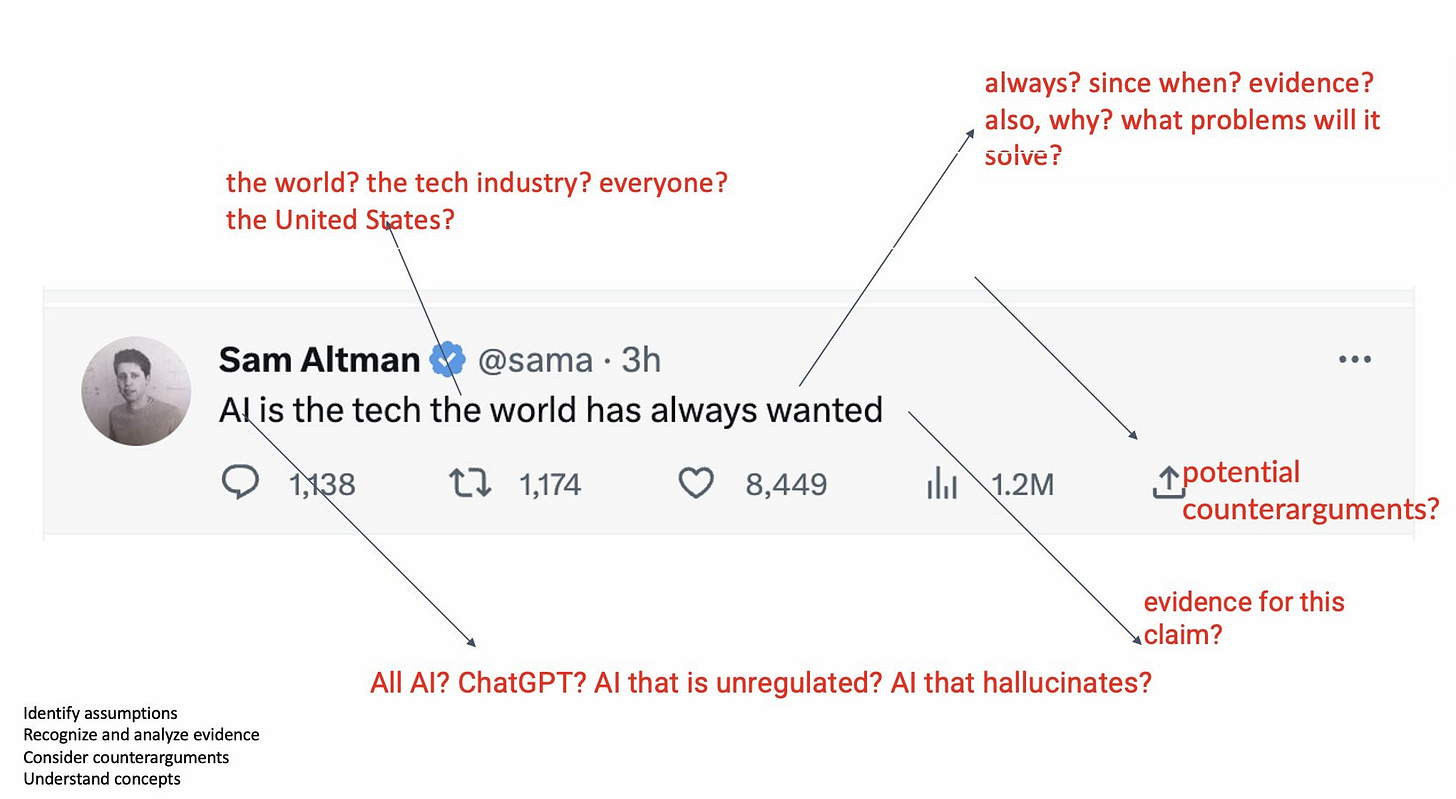

Sam Altman is sincere. The above tweet won’t resonate with many people (it doesn’t for me), but he says what he believes. Comparing the ideas he expressed in those 2015 blog posts with OpenAI’s principles reveals that his words and actions have been consistent throughout the years. When he had nothing at stake he already believed what he’s now trying to make a reality. It’s legitimate to disagree with him but it’s illogical to say he’s dishonest.

Altman is good at imprinting his vision into his writing, too. A kind of marketing genius. The problem with the above screenshot isn’t that he’s deceitful (at least not consciously) but that he’s overconfident given how little we know about the future of AI. Just as overconfident as he appears in those eight-year-old articles.

This overconfidence, which his co-founders share (like many intelligent people), also permeates the SI article. It must feel great to write something that suggests you nailed a decade-old prediction. But Altman should be careful about trying to fulfill too early a prophecy he himself made.

Here’s another version of the above tweet:

I love it because it prompts critical thinking without belittling Altman’s stance. It approaches his assertion without blind trust. That’s what I want to do with his new blog post “Governance of superintelligence.”

Let’s question the premises, the arguments, and why we’re talking about this at all. Let’s see what hides behind the appealing words. Behind the overconfidence. And behind this to-be-fulfilled prophecy of SI.

Dissecting ‘Governance of superintelligence’

Here’s the first paragraph:

“Given the picture as we see it now, it’s conceivable that within the next ten years, AI systems will exceed expert skill level in most domains, and carry out as much productive activity as one of today’s largest corporations.”

OpenAI has given a timeline. Ten years. Yet, there’s intentional ambiguity in whether the deadline refers to AGI or SI (or something else). Given that the post is all about the latter (they only mention AGI once and it’s to use it as a reference to define SI: “future AI systems dramatically more capable than even AGI”), it’d be acceptable for an outsider to assume the timeline refers to that. However, even by optimists’ standards, that’s too soon. AGI by 2033 seems a reasonable reading (not necessarily an accurate prediction, though).

By alluding to a concrete date—even if it refers to something different—the SI issue feels urgent. OpenAI is making it seem that we have 10 years before SI risks and dangers become uncontrollable (thus my headline, proving this trick makes the topic more attractive).

Next two paragraphs:

“In terms of both potential upsides and downsides, superintelligence will be more powerful than other technologies humanity has had to contend with in the past. We can have a dramatically more prosperous future; but we have to manage risk to get there. Given the possibility of existential risk, we can’t just be reactive. Nuclear energy is a commonly used historical example of a technology with this property; synthetic biology is another example.

We must mitigate the risks of today’s AI technology too, but superintelligence will require special treatment and coordination.”

Are we happy with their use of “superintelligence”? We shouldn’t be. “AGI” does all the work here; “a superintelligence is beyond an AGI,” but AGI itself is undefined, so SI remains undefined by extension. They can’t define an SI (it’s imaginary) so they don’t. They don’t understand it and they don’t know how to create one, but they don’t hesitate to claim it’ll be a tremendously powerful entity that entails (vast) “upsides and downsides.”

SI, whatever it is, will “require special treatment and coordination” to keep under control, so they suggest a model like the International Atomic Energy Agency, a historical precedent the world came up with to control atomic bomb proliferation and nuclear energy misuse. However, unlike nuclear weapons, SI is hypothetical. Can an existing model of international regulation contain a power that surpasses human ingenuity and intelligence by orders of magnitude? Isn’t that like when my cat tries to hide from me under the couch but her tail is still visible? She can’t outsmart me. We can’t outsmart it.

As Altman wrote in another post in 2015, making predictions about tech is hard. Albert Einstein thought, Altman recalls, that “There is not the slightest indication that [nuclear energy] will ever be obtainable. It would mean that the atom would have to be shattered at will.” And he was wrong. Doesn’t this tendency to make mistaken predictions apply to Altman as well? It’s hard to come up with an adequate mechanism (agency or whatever) to regulate something that’s, as of now, science fiction (and may forever be, of course).

OpenAI’s leading trio proposes a three-part starting point to safely develop an SI:

“First, we need some degree of coordination among the leading development efforts to ensure that the development of superintelligence occurs in a manner that allows us to both maintain safety and help smooth integration of these systems with society … we could collectively agree … that the rate of growth in AI capability at the frontier is limited to a certain rate per year …

Second, we are likely to eventually need something like an IAEA for superintelligence efforts; any effort above a certain capability (or resources like compute) threshold will need to be subject to an international authority that can inspect systems, require audits, test for compliance with safety standards, place restrictions on degrees of deployment and levels of security, etc. …

Third, we need the technical capability to make a superintelligence safe. This is an open research question that we and others are putting a lot of effort into.”

Proposals that require “collective” coordination are doomed to fail. Simply because not everyone believes SI is a threat or even a possibility (no need to mention China). Limiting “the rate of growth in AI capability at the frontier” is nevertheless interesting if smaller efforts like open-source initiatives are left untouched, as they seem to propose; “We think it’s important to allow companies and open-source projects to develop models below a significant capability threshold.”

However, I wonder how much OpenAI believes this slowdown is a good policy given their rejection of the 6-month moratorium. Like them, I agree with the overhang hypothesis: the idea that stopping (or slowing down) AI development would ensure that, once it’s resumed, we’ll observe a sudden jump in AI capabilities, because all the isolated components (e.g., chips, algorithms, training data) will improve in the meantime so that, when they’re put back together, the resulting AI will be unpredictably smarter. Greg Brockman used this idea to defend OpenAI’s incremental deployment approach during his April TED talk. Doesn’t that apply here?

About the regulatory agency: during the Senate hearing, Prof. Gary Marcus proposed (again) a CERN-like project to coordinate all efforts on building superpowerful AI systems (not necessarily SI, as he doesn’t believe we’re that near). It also reminds me of the island idea that Ian Hogarth, co-author of the annual “State of AI Report,” defended in a lauded essay in The Financial Times.

Yet some have pointed out that existing regulatory bodies (like the FTC) can help and that “efforts to govern AI around the world” are ongoing. Of course, they’re not focused on SI but on current problems, but why would they be? If you look closely it may seem that the possibility of an SI is the only reason a new agency (which OpenAI could influence) is necessary in the first place.

They end the blog post by saying that “Given the risks and difficulties, it’s worth considering why we are building this technology at all.” That’s exactly what Eliezer Yudkowsky is wondering:

“At OpenAI, we have two fundamental reasons. First, we believe it’s going to lead to a much better world than what we can imagine today …

Second, we believe it would be unintuitively risky and difficult to stop the creation of superintelligence. Because [among other things] … it’s inherently part of the technological path we are on…”

The first reason is ridiculous. SI is an imaginary thing so we can imagine that the resulting “economic growth and increase in quality of life will be astonishing.” A godlike tool can do godlike things. But is SI really the best way to approach the problems of the present? Climate change, inequality, geopolitical tensions… I’m really interested in any argument that could convince me that this tool will be the panacea for every sociopolitical problem we face today.

About the second reason, I’ll let Altman himself answer it. He ends his article on technology predictions with a joke disguised as a—possibly apocryphal—quote: “‘Superhuman machine intelligence is prima facie ridiculous.’ - Many otherwise smart people, 2015.”

I find it funny because his thesis—that making predictions about technology is hard—applies equally well to this other quote that reflects the brittle foundations on which he bases his premises and arguments about SI: “Superhuman machine intelligence is prima facie inevitable” - Sam Altman, probably, 2023.

Imagine that I built a car that can go 800mph. And I confidently predict that next year's model will achieve 1200mph. This may all sound quite impressive, until we realize that my "genius" invention ignores the fact that pretty much nobody can control a car at those speeds. That's what I see happening here: highly skilled technicians with a very limited understanding of the human condition, or even much interest in it.

AI or SI has nowhere to get its values but from us, and/or perhaps the larger world of nature. In either case, evolution rules the day, the strong dominate the weak, and survival of the fittest determines the outcome. If Altman's vision comes to pass, we humans will be neither the strongest nor the fittest. Altman's vision might be compared to a tribe of chimps who invent humans with the goal of using the humans to harvest more bananas. That story is unlikely to turn out the way the chimps had in mind.

Speaking of chimps, a compelling vision of the human condition can be found in the documentary Chimp Empire on Netflix. Perhaps the most credible way to predict the future is to study the past, and Chimp Empire gives us a four-hour close-up look at our very deep past. The relevance is that the similarity between our human behavior today and that of chimps is remarkable.

The point here is that the foundation of today's human behaviors was built millions of years before we were human. The fact that these ancient behaviors have survived to this day almost unchanged in any fundamental way reveals how deeply embedded they are in the human condition.

AI is not going to change any of these human behaviors; it will just amplify them. Some of that will be wonderful, and some of it horrific. When the horrific becomes large enough, it will erase the wonderful.

In April I was in San Francisco and heard Sam Altman speaking. It was at that time that it dawned on me how much he and the people in the same tech bubble appear to have lost touch with reality.

I couldn't find a better example of Heidegger's Gestell: tech has become so pervasive that they can't think outside of technical solutions anymore. Every problem needs an AI solution.

But does it?

Great article, Alberto.