Prompt Engineering Is Probably More Important Than You Think

And I know you know it's very important

Because you’re reading this, I assume you’re aware that knowing how to prompt generative AI systems well is critical to staying ahead. Even so, I’d be willing to guess that (most of) you underestimate how important it will become in the near future, as long as we don’t fall into an AI winter or unintentionally create a superhuman evil AI.

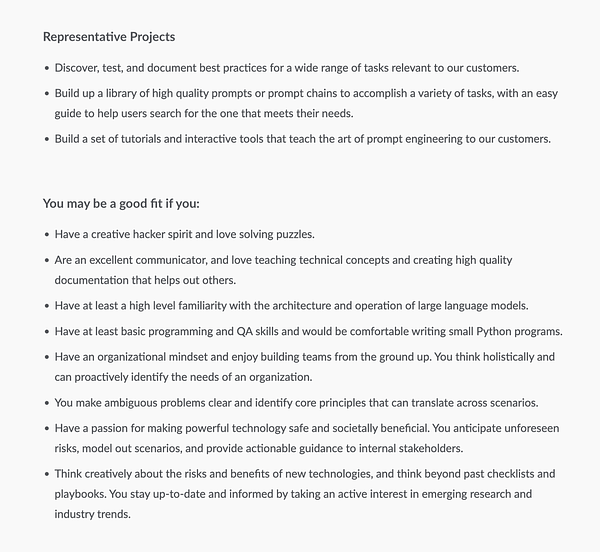

Here’s a hint, in case you missed it:

That’s the monetary value, $300K/year, that one of the top AI startups in the world ascribes to this skill (and it’s safe to say they’re among the best positioned to judge what prompt engineering is worth).

Let me tell you this: we’re early. We’re witnessing the emergence of a new set of abilities that, as I’ll argue in this essay, will be enormously valuable soon (if they aren’t already) and will have a profound impact on anyone who learns to master them.

I don’t think you need convincing for that. My goal is not to persuade you but to explain why prompt engineering is so important—why it’s valued at $300K/year. To achieve that, I’ll give you context and a framework to think about prompt engineering that you won’t find in mainstream media.

Prompt engineering is the skill of the future

I’ll start with a premise I think we all agree with: directly usable AI (e.g., generative tools) will gradually become ubiquitous, like smartphones and social media. (I add “directly usable” because other kinds of AI are already everywhere. Recommender systems power every feed-based app on your phone. However, unlike generative AI, you can’t use them directly.)

Generative AI isn’t at that level yet. ChatGPT was released barely two months ago, and although it brought AI into the mainstream, I’d say most people haven’t yet grasped what all this means. Now that giants like Microsoft and Google have decided to go all in on AI (even if they’re executing very poorly), AI will reach the zenith of consumer tech.

ChatGPT has been the first truly global breakthrough and an unprecedented success story, but it’s far from reaching the 1B+ users that other software products, like Microsoft Windows or Google Search, have. This isn’t to diminish its impressive run but to emphasize that we’re early. Once the tech matures, I’m confident it’ll be generally regarded as the third consumer tech revolution of the 21st century (the other two being the smartphone and social media).

Another point on which I think the consensus is total: the UX that prompt engineering enables is hard to replace. There’s no better user interface than natural language, because that’s what we evolved to do best. Any other means of interacting with machines is suboptimal for us.

Since the invention of computers, very smart people have worked hard with the sole purpose of building increasingly intuitive abstractions, in the form of high-level programming languages, just to make it easier for us to interact with machines. On top of that, we have no-code tools, which are how most people interact with computers.

Between the machine code running on your computer’s CPU and the interface you clicked on to get from your desktop to this Substack post, there are many, many layers that increasingly trade off precision and speed for simplicity and intuitiveness as they get closer to the surface. That’s why no-code tools are awesome. They allow us to bypass the need to learn how machines work. We’re no longer forced to meet our silicon partners in the middle, which entails years of practice and deep CS knowledge.

But no-code tools, for now, substantially limit the degree to which non-programmers can interact with computers: the buttons you can click and the switches you can flip are superficial. Communication is heavily constrained. It’s like trying to talk to someone using only predefined sentences. A skilled coder, in contrast, can metaphorically perform surgery on software: they can go inside a computer (virtually) and achieve pretty much anything that’s possible.

Programming languages (from the lowest-level and most precise to the most intuitive of all) and no-code tools each have virtues and drawbacks. This framing makes the historical importance of prompt engineering clearer: it’s the solution that takes the best of both worlds. It is, for now, the best means we have to interact with AI systems. And soon, with computers as a whole.

Current prompt engineering is quite primitive. There’s huge room for improvement, and we’re only scratching the low end of a rich spectrum of possibilities. But we’re moving fast. Bing chat’s response to a given prompt is much richer and more accurate than GPT-3’s, and harder prompts that GPT-3 struggled with are easily interpreted by Bing.

Traditional programming languages (even higher-level ones like Python) require you to leave the comfort of human communication to meet the machine in the middle. No-code tools are more rigid and allow fewer degrees of freedom. Prompt engineering is how we bring the machine to us while retaining the flexibility to tweak its guts at will. And, most importantly, it’s almost as intuitive as natural language.

I’m not saying it’s the perfect solution. Prompt engineering has shortcomings, too. For instance, prompting a language model to get something specific in return is akin to trying to understand the mechanisms that govern a black box. It sometimes resembles an artist’s creative process more than a standard engineering procedure. It’s hard, but we’re collectively getting better at it.

Let me summarize what we’ve got so far: first, generative AI is the consumer technology of the future, and second, prompt engineering (which takes the best of programming languages and no-code tools, plus a bit of human language) is how we’ll optimize human-machine interaction.

The inevitable outcome (allow me a slight exaggeration here) is that prompt engineering could be as important in the future as knowing how to speak English is today (all fellow non-native English speakers will agree that it’s quite a differentiating skill for us). In the end, not many people know how to perform pancreatic surgery (aka programming), but we all know how to ask a stranger for directions. If AI systems ever become widely accepted as copilots, or even partners, those who speak their language will be well positioned.

Prompt engineering, code, and natural language

Prompt engineering is quite special. I compared it with programming languages above, but that’s not a completely fair comparison. It’s also similar to natural language in some respects, but again the parallels aren’t perfect. Let me explain in more depth how it differs from both and how it stands out.

Code is unambiguous, precise, and syntactically strict. What differentiates one programming language from another is task/domain suitability and level of abstraction, but that’s pretty much it (coders in the audience will kill me for this simplification). Anything you can do with one Turing-complete programming language (all the well-known ones fall into this category, except HTML, sorry) you can do with all the others. There’s only one possible meaning for a given string of code, so there’s no room for interpretation. Quite the tool if you know how to use it.

But it’s hard for humans to understand (more so the further you walk down the ladder of abstraction). It’s also radically ill-equipped to express many things that, although irrelevant to computers, matter to us. In short: code is where we give up the quintessential aspects of humanity to communicate with computers.

Natural language is the opposite: highly ambiguous, very context-dependent, imprecise, inaccurate, full of redundancy, and open to interpretation. We take advantage of the vast common ground all humans share when communicating with one another. It makes being super precise unnecessary (which saves resources, e.g., time and energy). But this only works as long as that overlap is large. Once you leave it (e.g., you immerse yourself in a different culture or country, talk about a highly technical topic with an expert when you aren’t one, or try to express something with perfect precision), you begin to notice the flaws of natural language.

Prompt engineering is like code in that it allows communication with machines (or AI systems), but it retains the flexibility and versatility of natural language. For the sake of simplicity and illustration, I’d say programming languages and natural languages are clusters of points sitting at opposite extremes of the spectrum that is prompt engineering (if you allow me to use the concept in the broader sense of “any sequence of language, written or otherwise, that elicits a response from an interlocutor, whether AI, computer, or human”).

Prompt engineering won’t last forever, but while it does, it’ll be the best way to talk to our alien silicon friends

Note that this definition of prompt engineering implies that, in its ideal form, it’d be comparable to talking to your best friend (that’s a stretch but bear with me).

Some people downplay the importance of prompting skills because better AI systems may render them irrelevant (as in “we won’t need to learn a specific skill anymore because what we’re naturally born with will suffice to communicate with AI”). But, if you think about it, even human communication requires practice and learning, and, needless to say, not everyone is equally good at it.

Sam Altman is one of the high-profile people who hold this view. He believes prompt engineering is “just” a phase on the way to machines that understand human language naturally. In a 2022 interview with Reid Hoffman, co-founder of LinkedIn, he said, “I don’t think we’ll still be doing prompt engineering in five years.” I think he’s using the term here in a narrower sense than I am (as I interpret it, he’s referring to the keywords and descriptors we use with DALL-E, in contrast to the more fluent, natural-language-like prompts we use with ChatGPT).

But, even if he truly believes there won’t be any need to learn a skill in a few years (I’m not sure we can extend his statement that far), he still acknowledges the current importance of prompt engineering, as his (much more recent) tweet above suggests.

The view that prompt engineering is merely a necessary intermediate step on our journey to something much better may well be true in the long run (I agree with that). However, the stage during which prompt engineering (as I define it) is valuable enough to be a top skill but primitive enough not to be innately intuitive could last many years (certainly more than five). In the distant future, we may talk to machines like we talk to our neighbors, but it’s safe to say that won’t happen anytime soon (I’m not even sure we’re going in the right direction anyway).

But let’s go with a conservative estimate (at least for some) and say it’ll take us 30 years to create AI systems that understand human language unambiguously enough that there’s no longer any need to develop prompting skills. During those 30 years, prompt engineering (across the whole spectrum of forms it may evolve through) will be the most useful and powerful method of communicating with AI systems, and the only way we’ll have to access whatever it is they have that’s analogous to “human internal mental states.”

You probably think systems like ChatGPT and Bing chat hide enormous complexity. I agree. We’ve barely scratched the surface of what they hide within. There are many recent examples that illustrate this point: the existence of Sydney, the DAN jailbreak, the SolidGoldMagikarp phenomenon, and, in case you had forgotten, Stable Diffusion’s monster, Loab, all suggest there’s a vast universe of unknowns—some of which we’ll unveil, some of which will forever remain in the land of mysteries.

There’s so much we don’t know about AI, and the only tool we have to reveal its secrets (and thus explain and understand its behavior) is prompt engineering. Now imagine how you’ll feel in just five years, facing systems orders of magnitude more powerful and inscrutable.

You may be thinking, “well, we do just fine getting along with and understanding our fellow humans, who are much more complex in structure and function than current (and arguably future) AI systems.” (I have my doubts about the first part being true, but I’ll leave it at that.) Well, this is where the analogy with which I started this section breaks down in a way that further reinforces my thesis.

You find it easy to interact and connect with humans because you’re also a human. The default overlap we all have in the way we think, feel, perceive, and understand the world, ourselves, and our surroundings is so great that the underlying complexity that powers our cognitive prowess is dominated by our familiarity.

In contrast to human-to-human communication, interacting with computers is unnatural. Computers are aliens to us. That’s why consciously learning prompt engineering is key—it won’t come to us as naturally as we’d like. People who don’t know this try to force the computer to adapt to what they know (human communication) and that’s precisely why they fail to get the results they want.

If you’ve tried exhaustively to get ChatGPT or Bing to do what you want, you know what I’m talking about. In some cases, the problem is their genuine limitations (e.g., lack of commonsense reasoning), but in many others it’s our inability to communicate adequately with the means at our disposal that makes them seem dumb.

Prompt engineering will be, pretty much literally, the only means we’ll have to talk to these increasingly powerful aliens. And we don’t really know in which ways they’re aliens because we can’t directly ask—except with prompts.

To finish, let me clarify that, despite what the metaphors I’ve used throughout the essay may suggest, I don’t think these AI systems have intent, sentience, or a mind in the sense we humans do, and I don’t think they will anytime soon. Yet I’m not sure it matters that much either.

Prompting language is definitely something in between programming and natural language. Pure natural language does NOT work well with AI.

The best example of this is how Stable Diffusion prompting radically changed over time. It started with people typing natural language; the AI did 'understand' the subject matter, but the results looked terrible.

Then came the Greg Rutkowski era of cargo-culted prompts, which improved results somewhat.

Then came the big revolution of the NovelAI model. What makes it special is that it uses the danbooru tagging system (definitely NSFW), where an image is tagged with 20-50 tags of one or two words each. The tags are not arbitrarily decided upon; they are consistently understood and enforced by the community, which avoids descriptions that don't map to any direct visual feature.

So the NovelAI prompts look like this: Masterpiece, 1girl, long hair, red hair, dress, street.

There's no longer any human grammar involved. And the results work way better.
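To make the contrast with free-form sentences concrete, here is a minimal sketch of assembling a tag-style prompt like the one above. The helper `build_tag_prompt` and its conventions (lowercasing, dropping duplicates, prepending a quality tag such as "masterpiece") are hypothetical illustrations, not NovelAI's or danbooru's actual rules.

```python
def build_tag_prompt(tags, quality_tags=("masterpiece",)):
    """Join short descriptive tags into a comma-separated prompt.

    Hypothetical helper: the normalization and ordering used here are
    illustrative assumptions, not any tool's official tagging rules.
    """
    seen = set()
    ordered = []
    for tag in (*quality_tags, *tags):
        # Trim, lowercase, and collapse internal whitespace so that
        # "Red Hair" and "red  hair" count as the same tag.
        normalized = " ".join(tag.strip().lower().split())
        # Skip empty tags and repeats, keeping first-seen order.
        if normalized and normalized not in seen:
            seen.add(normalized)
            ordered.append(normalized)
    return ", ".join(ordered)


prompt = build_tag_prompt(["1girl", "long hair", "Red Hair", "dress", "street", "long hair"])
print(prompt)  # masterpiece, 1girl, long hair, red hair, dress, street
```

The point of the sketch is the shape of the output: a flat, ordered list of controlled-vocabulary tags with no grammar connecting them.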

Since then, even photo-style SD models are trained on the NovelAI dataset and use the tagging system originally developed for anime pictures.

What this suggests is that pure natural language prompting may not be the end result of AI. AI will prefer a subset of natural language that is more precisely and consistently defined, with different grammar rules. That becomes the new prompting language.

This old novel called "A Working Theory of Love" is essentially about this guy in Silicon Valley hired by a Ray Kurzweil-esque figure to do lots of manual interaction with a chatbot; basically conversation-engineering, similar to prompt-engineering. Crazy to think this is now a real job instead of just fiction.