OpenAI Whisper Holds the Key to GPT-4

And 8 key features that make it the best ASR model (hey Siri, this one's for you)

Today I’m covering a subfield of AI I never thought I’d be writing about, mostly because it’s much more mature than the ones I usually cover (large language models, AI art) and no breakthroughs were in sight. But I was mistaken. I’m referring to automatic speech recognition (ASR), as you have surely inferred from the headline. Bear with me, because there are good reasons to read this news.

OpenAI announced Whisper a couple of days ago and people are already going crazy. Not because it’s a new concept or because of improvements in algorithm design. No, the reason is simpler: Whisper works better than any commercial ASR system. Alexa, Siri, and Google Assistant (the ones you’re probably familiar with) will all feel like last-century tech after you try Whisper. And you can try it: OpenAI, the company that tends not to do justice to its name, decided to open-source this model. The digital experience is going to change radically for many people.

Let’s see if the tech rises to the buzz.

A brief overview of Whisper: What it is and does

OpenAI describes Whisper as a general-purpose end-to-end weakly supervised transformer-based ASR family of models. Let’s see what all this jargon means in simpler words.

“General-purpose” means that Whisper, in addition to the core task of speech recognition (transcription), can do the peripheral tasks too, like voice activity detection, language identification, and machine translation.

“End-to-end” means the models were trained seamlessly without human intervention or hand-made modules—in contrast to training the parts (for peripheral tasks) separately and then merging them.

“Weakly” means the threshold to accept audio data was lower than usual (lower-quality data can improve the model’s generalization capabilities).

“Supervised” means it’s trained on audio-text pairs, in contrast to previous models that are self-supervised (the reason for the change lies in the dataset; more on this later).

“Transformer-based” you already know: It predicts the next element in the sequence by attending to previous elements. As a side note, OpenAI researchers chose the original transformer architecture instead of a fancier modern version because they didn’t want to make Whisper great through model improvements. They wanted to prove that high-quality supervised ASR is possible if enough data is available:

“Since the focus of our work is on studying the capabilities of large-scale supervised pre-training for speech recognition, we use an off-the-shelf architecture to avoid confounding our findings with model improvements.”

And it’s a “family” because Whisper has five versions. From smallest to largest in terms of parameters: Tiny (39M), base (74M), small (244M), medium (769M), and large (1.55B). You may find it surprising that they’re small compared to large language models (LLMs) like GPT-3 (175B) or LaMDA (137B). Making a good-enough generative language model is a next-level quest compared to other AI applications. From here on when I mention Whisper it’ll be Whisper large (model size matters for quality) unless stated otherwise.

Read more here: Blog (demos available), paper, GitHub repo (model card, Colab example, and code).

Okay, that was the most technical bit of the article. You don’t have to remember any details to understand the next sections. What I want to focus on now is what makes Whisper relevant to you and the AI community in general. I’ve read all the resources I linked above and I’ve compiled a list of what I consider to be Whisper’s eight key features (both at research and production levels).

Whisper in 8 keys: What makes it special

1. Whisper has super high accuracy

Even if it’s not always state-of-the-art (SOTA) in ASR benchmarks, Whisper matches human performance in real-world settings better than alternatives. From the paper:

“These results indicate that Whisper’s English ASR performance is not perfect but very close to human-level accuracy.”

This means that you’ll find Whisper better at understanding you than any other ASR system (as I said, Alexa and Siri are significantly worse if the conditions aren’t optimal). This extends to real-world scenarios (e.g., with background noise, fast speech, or heavy accents; more on this later). People not affiliated with OpenAI who have tried the system report being impressed by the quality. For instance, Andrej Karpathy (ex-director of AI at Tesla) tweeted this earlier today:

2. Whisper is open source

OpenAI open-sourced Whisper under an MIT license. There’s a clear trend among AI companies to make the models and code available: OPT, BLOOM, Stable Diffusion... This practice improves the democratization of AI tools and, equally important, permits a better assessment of how they work, where they fail, and how they can be improved.

I’ve previously argued that open source is the way forward for AI and, when done with safety in mind, it creates a much stronger impact than AI under strict control and privacy. Companies like Google, which is falling behind in these types of practices (opening secondary tech vs. opening the best you have are very different things), will have a hard time leveraging the possibilities if they remain closed (DALL·E mini’s success over DALL·E is a lesson for every AI company that thinks great quality > openness).

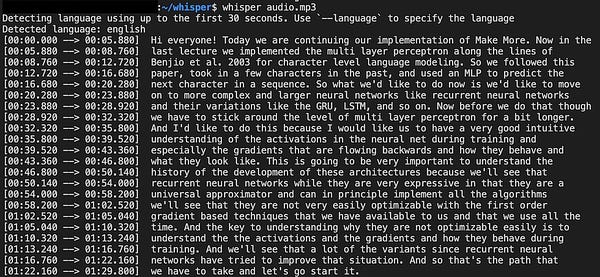

3. Whisper is easy to use

This goes hand in hand with it being open source. Hugging Face has already set up a demo you can use on your computer or smartphone. Data scientist Yuvi Sharma built a speech-text-speech system using Whisper (speech-to-text), BLOOM (text generation), and CoquiTTS (text-to-speech). You talk, Whisper recognizes and transcribes your speech, BLOOM generates text according to what you asked, and Coqui makes an audio file from it. All in less than 2 mins.

This reminds me of what has been happening with Stable Diffusion this month—developers are releasing tools and resources non-stop. And, luckily for us, most are no-code. This is the result of open-source policies. More accessibility for all.

For the coders out there: You can always download Whisper onto your computer and play with the code. The largest model requires 10 GB of VRAM, so it’s not for everybody. The smallest requires 1 GB of VRAM and is 32x faster, but the quality is notably worse. There are three versions in between.
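If you want a feel for what that looks like in practice, here is a minimal sketch of choosing a model size for your GPU and transcribing a file. The `whisper.load_model` and `model.transcribe` calls come from the repo's README. The helper names `pick_model` and `transcribe` are my own, and the VRAM and speed figures for the three middle models are what I recall from the README at release, so treat them as assumptions rather than official numbers.

```python
# Approximate requirements per model size. The tiny and large rows match the
# figures quoted in this article; the three middle rows are ASSUMED from the
# repository README at release and may have changed since.
MODELS = [
    # (name, parameters, approx. VRAM in GB, speed relative to large)
    ("tiny", "39M", 1, 32),
    ("base", "74M", 1, 16),
    ("small", "244M", 2, 6),
    ("medium", "769M", 5, 2),
    ("large", "1.55B", 10, 1),
]


def pick_model(vram_gb: float) -> str:
    """Return the largest model whose approximate VRAM need fits the budget."""
    fitting = [name for name, _, need, _ in MODELS if need <= vram_gb]
    if not fitting:
        raise ValueError(f"no Whisper model fits in {vram_gb} GB of VRAM")
    return fitting[-1]  # MODELS is ordered from smallest to largest


def transcribe(path: str, vram_gb: float) -> str:
    """Transcribe an audio file. Needs the openai/whisper package and ffmpeg."""
    import whisper  # imported lazily so pick_model works without the package

    model = whisper.load_model(pick_model(vram_gb))
    result = model.transcribe(path)
    return result["text"]


if __name__ == "__main__":
    for budget in (1, 4, 10):
        print(f"{budget} GB of VRAM -> {pick_model(budget)}")
```

Calling `transcribe("audio.mp3", vram_gb=4)` (with a hypothetical `audio.mp3`) would load the small model under these assumed numbers; expect the first call to download the weights.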

4. Whisper is multilingual

There’s also a trend to make AI more inclusive. The English-speaking world is large, but 94% of the world’s population doesn’t speak English as a first language, and 75% don’t speak it at all. The native languages of billions of people had been neglected until now. BLOOM is an LLM that works for up to 46 languages and pioneered multilingualism as a core feature of AI models. Meta AI released the project No Language Left Behind (NLLB) in July. It aims to open-source AI models that can perform machine translation between 200 languages. OpenAI trained Whisper on 97 languages. The 96 languages other than English account for 17% of the dataset.

It’s still insufficient: people who speak languages that aren’t well represented in the data will experience reduced quality. And there’s a gap of several orders of magnitude between languages that are widely represented on the internet, like Chinese (23.5K hours in Whisper’s dataset) or Spanish (11.1K h), and others that aren’t, like Hindi (12 h) or Bengali (1.3 h), despite being spoken by hundreds of millions of people. Less common languages, although understandably less present in the data, are still the first language for many people across the world, the very people who would benefit the most from these types of technological advances.

5. Whisper can enhance many applications across industries

Regarding real-world uses, imagine that you had a turbocharged Siri or Alexa, not in terms of intelligence but in terms of transcribing your speech almost perfectly. You could take study notes with your voice without having to check them constantly. You could enjoy instant, accurate subtitles in YouTube videos or movies. And companies will certainly embed Whisper into existing services. Alexa won’t fail to catch what you say anymore, regardless of noise, accent, or speech cadence (if Amazon is smart).

Even more importantly, Whisper could mean a quality-of-life improvement for people with speech or hearing impairments. For instance, you could instantly transcribe your words for a deaf person. It could be an improvement in inclusivity for consumer services. And all this without mentioning the uses for professional work: law, healthcare, banking, marketing, education… Virtually every industry could benefit from this, and the use cases are nothing short of innumerable.

6. Whisper is multitasking

As explained in the beginning, Whisper does ASR but also all the tasks that surround the core problem. For instance, Whisper was also trained on X→English translation (18% of the dataset) and was evaluated on language identification (performance similar to SOTA models). In general, this makes Whisper easier to integrate with existing products or services, and easier to deploy.
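How does a single model know which task to perform? As I understand the paper, the task is specified with special tokens at the start of the decoder's input. The sketch below is a simplified illustration of that idea, not the library's actual tokenizer: the function name `decoder_prompt` is hypothetical, and it works on plain strings instead of token IDs.

```python
def decoder_prompt(language: str, task: str, timestamps: bool = False) -> list[str]:
    """Build the special-token prefix that tells the decoder what to do.

    Simplified sketch of the multitask format described in the paper; the
    real tokenizer maps these strings to integer token IDs.
    """
    if task not in ("transcribe", "translate"):
        raise ValueError("task must be 'transcribe' or 'translate'")
    prompt = ["<|startoftranscript|>", f"<|{language}|>", f"<|{task}|>"]
    if not timestamps:
        # Ask for plain text without time-aligned segments.
        prompt.append("<|notimestamps|>")
    return prompt


if __name__ == "__main__":
    # Spanish audio, English text out: the X→English translation task.
    print(decoder_prompt("es", "translate"))
    # Spanish audio, Spanish text out: ordinary transcription.
    print(decoder_prompt("es", "transcribe"))
```

With the real package, the equivalent switch is the `task` argument, e.g. `model.transcribe(path, task="translate")`.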

7. Whisper is robust and generalizes well

This is related to the high-accuracy point, but I think it’s worth emphasizing. One of the main limitations of existing ASR systems is that the underlying models are trained on specific datasets (or a combination of them) of laboratory-grade quality. This means the models do well when evaluated on target benchmarks, but even slight deviations from those conditions cause huge drops in quality.

Whisper was trained on a lot of data in a weakly supervised manner, and that makes it more robust and capable of generalizing to out-of-distribution scenarios (out-of-distribution means, in simple words, that train and test data have different essential features). For instance, Whisper is better than alternatives at understanding accents or fast speech, at recognizing technical jargon and punctuation marks, and at detecting silence or filtering out background noise.

8. Whisper’s training dataset is large, diverse—and private

It all looked too perfect so far. If you were expecting a “but” moment, here it is. Whisper has a dark side. For those of you who work in tech, this feature will instantly stand out. Let’s start with the objective part: Whisper was trained on 680K hours of audio-text data (that’s 77 years!) scraped from the web. This is an order of magnitude more than the largest existing supervised dataset of this type.
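If you want to check the dataset numbers I've been quoting (17% multilingual, 18% translation, 77 years), here is a quick back-of-the-envelope script. The 680K total is the figure above; the two subset sizes are the hour counts I took from the paper, so treat them as assumptions if you are working from a different source.

```python
TOTAL_HOURS = 680_000         # total audio-text data, as quoted in this article
MULTILINGUAL_HOURS = 117_000  # ASSUMED from the paper: the 96 non-English languages
TRANSLATION_HOURS = 125_000   # ASSUMED from the paper: X -> English translation
ENGLISH_HOURS = TOTAL_HOURS - MULTILINGUAL_HOURS - TRANSLATION_HOURS


def share(hours: int) -> int:
    """Percentage of the full dataset, rounded to the nearest integer."""
    return round(100 * hours / TOTAL_HOURS)


# Listening non-stop, 24 hours a day, 365 days a year.
years_of_audio = TOTAL_HOURS / (24 * 365)

if __name__ == "__main__":
    print(f"English transcription: {share(ENGLISH_HOURS)}%")
    print(f"Multilingual transcription: {share(MULTILINGUAL_HOURS)}%")
    print(f"X -> English translation: {share(TRANSLATION_HOURS)}%")
    print(f"Total duration: about {years_of_audio:.1f} years")
```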

In a way, this makes Whisper a breakthrough in audio-text data acquisition more than a breakthrough in algorithm design or scaling laws. OpenAI researchers managed to show that data scaling has a strong influence on the quality of an ASR system. This makes the dataset a key aspect of Whisper’s release. If it weren’t for all the data OpenAI has gathered, Whisper wouldn’t exist.

Now, the shady part: The dataset is private. OpenAI decided to nod to their name by opening the inference models and the code but decided to keep the dataset closed. Why? I don’t know. And no one knows where the data came from. They explain in the paper that machine-generated data often lack sufficient quality so they came up with some heuristics to remove it. But, did they remove it all? If it’s not from an AI source, where did they find 10X human-labeled data? A lot of open questions that OpenAI doesn’t seem to be willing to address.

This means that developers and laypeople can still benefit from Whisper, but university researchers can’t analyze the data provenance or the potential biases within (here’s a good thread on this), and other tech companies can’t build on OpenAI’s findings, perhaps to build and open-source an improved version.

More intriguing is the question of why they would keep it closed. They obviously don’t plan to profit from Whisper (directly). I’ll let you think about this because I think there’s more here than meets the eye. I don’t have answers, only speculation.

So, let’s speculate. This is where the fun begins.

Beyond Whisper: A crazy hypothesis about OpenAI’s future

Why would OpenAI want to keep Whisper’s training set private? I haven’t been able to find any reliable source that knows the answer. There are many possible (boring) hypotheses. For instance, OpenAI doesn’t want the dataset to be available to competitors because they plan to leverage it for profit in some way. Another option is that there are legal issues in place (web scraping can be tricky) and they prefer to remain silent. Another option is simply that it’s their modus operandi and they didn’t see a reason to change it now—despite their apparent shift toward openness.

But there’s a more exciting possibility, and a quite plausible one if you ask me. This hypothesis was shared on Twitter by Ethan Caballero, an ML Ph.D. student at the Mila-Quebec AI Institute:

Okay, let me explain.

First, GPT-4. It’s the next version of the GPT family. GPT was published in 2018, GPT-2 in 2019, and GPT-3 in 2020. It was expected that GPT-4 would be released in 2021, but OpenAI focused on AI art (CLIP and DALL·E). Now, after two years of waiting, the release date feels very close. Earlier this year, in April, I wrote an article on GPT-4, entitled “GPT-4 Is Coming Soon. Here’s What We Know About It” (if you go to Google Search and type “gpt-4” it’s still the number one result, which makes me proud!). Anyway, I predicted back then that GPT-4 would be released in July or August. The sudden interest in AI art probably slowed it down. I can’t know for sure, but I’d be willing to bet that we’ll have GPT-4 in the next couple of months (reasons below).

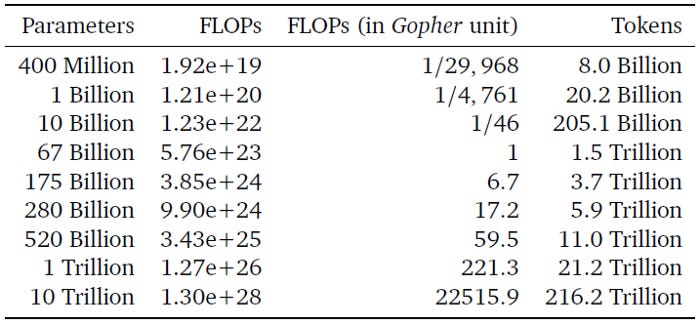

In that same article, I reviewed the most significant advancements the AI community had embraced since 2020 in the field of LLMs and matched them with the little information we had on GPT-4 (Sam Altman, OpenAI’s CEO, conducted a private Q&A in late 2021). One of the points I highlighted was that GPT-4 wouldn’t be larger than GPT-3, but it’d be much more optimized in terms of computing resources—and a notable leap in performance capabilities. For that, OpenAI would need much more data.

Here’s where DeepMind’s Chinchilla comes into play. DeepMind found that the generally accepted scaling laws (how to make models more performant by enlarging some feature: compute, data, or size) were incomplete. Making the models bigger was only half the story to make them more performant.

The other half? Data. DeepMind researchers found that, for a given compute budget, they could build a “compute-optimal” LLM by training a smaller model on much more data. They proved their hypothesis right with Chinchilla, a 70B-parameter model that reached instant SOTA status, surpassing by a significant margin LaMDA (137B), GPT-3 (175B), J1-Jumbo (178B), Gopher (280B), and MT-NLG (530B). They found all those models were undertrained. Chinchilla had gotten the most out of the training data given its size.

Here’s where we circle back to Caballero’s hypothesis: To train a compute-optimal GPT-3-size model, OpenAI needs much more data than DeepMind needed to train the 70B-parameter Chinchilla. Caballero’s guess? OpenAI will use Whisper to transcribe speech data from the internet and generate all the text data they’re lacking to make GPT-4 compute-optimal.

I predicted back in April that OpenAI would dismiss the older scaling laws and apply DeepMind’s findings to GPT-4. If Caballero’s hypothesis is true, my prediction will turn out to be correct.

But, is it possible? A commenter on Caballero’s thread estimated that just YouTube’s audio data amounts to around 12 trillion tokens. That’s more than enough to train a compute-optimal GPT-3-size GPT-4 (~3.7T tokens) and even models the size of MT-NLG or PaLM (~11T tokens). Of course, they’ll also need more computing power, but given that Microsoft backs OpenAI, they won’t have any problem with that.
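To sanity-check those numbers, here is a rough sketch based on a common reading of the Chinchilla result: about 20 training tokens per parameter (Chinchilla itself used roughly 1.4T tokens for 70B parameters). That ratio is my simplifying assumption, not the paper's exact fit, which is why it gives slightly lower figures than the ~3.7T and ~11T quoted above. The function name is hypothetical.

```python
# ASSUMPTION: rule of thumb derived from Chinchilla (1.4T tokens / 70B params).
TOKENS_PER_PARAM = 20

# Commenter's estimate of the tokens contained in YouTube's audio.
YOUTUBE_TOKENS = 12_000_000_000_000


def compute_optimal_tokens(n_params: int) -> int:
    """Approximate number of training tokens for a compute-optimal model."""
    return TOKENS_PER_PARAM * n_params


if __name__ == "__main__":
    models = [
        ("Chinchilla (70B)", 70_000_000_000),
        ("GPT-3-size (175B)", 175_000_000_000),
        ("MT-NLG-size (530B)", 530_000_000_000),
    ]
    for name, n_params in models:
        needed = compute_optimal_tokens(n_params)
        enough = "yes" if YOUTUBE_TOKENS >= needed else "no"
        print(f"{name}: ~{needed / 1e12:.1f}T tokens, covered by YouTube: {enough}")
```

Under this approximation, the estimated 12 trillion tokens would cover both a GPT-3-size and an MT-NLG-size model, which is consistent with the commenter's claim.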

What can we expect if OpenAI pulls this off? As popular tech blogger Robert Scoble said in a tweet last month, we can expect GPT-4 to be “just as exciting a Leap as GPT-3 was. Insane.” If that ends up being true, I can’t even begin to imagine the possibilities. Also, he implicitly said that GPT-4 is almost ready. Whisper may just be the last link in the chain. My release date predictions were off, but possibly not by much.